Note: The first part of this blog was written before I'd tried Claude Code with the Opus 4.6 model. The second part was written afterwards.

A distinction

Types of "AI"

If you've read the FAQ page you may have got the impression that I'm not the biggest fan of "AI". That's more-or-less accurate. I don't use it much in coding other than the auto-complete features in Copilot (they're turned on by default), because when I've tried to use it for anything more complex it tends to hallucinate methods that don't exist, and it's easier to write working code myself.

So first, let's talk about types of "AI". There are complex models that are highly specific to specialised fields (or even individual problems); these have good use cases, and have already resulted in things like better cancer detection and complex mathematical proofs (these are external sites and I have no control over their content; click at your own risk, etc.).

There are also things like Fireflies.ai, which is an assistant for virtual meetings that takes notes for you. It garnered some controversial publicity recently when one of the founders revealed (apparently on purpose) on LinkedIn that originally it was just actual people joining Teams calls and taking notes. I'm sure there's nothing worrying about having people you don't know anything about listening in on confidential company meetings. Definitely not.

More recently an "autonomous agent" called OpenClaw (the AI formerly known as Clawdbot and Moltbot) has been making headlines; it connects to messaging apps, uses applications for you, and maintains persistent memory. It makes use of other models rather than being an AI model itself, and the security considerations are pretty hefty since it has to log in to things to use them. It's open source and can be installed easily with a single script.

Then there are Large Language Models (LLMs) like ChatGPT, DeepSeek, Grok, Gemini, and so on, and their various sub-types.

In most cases, when you read a story about "AI" in the press, LLMs are what they're talking about. Here's a whole Instagram post about them and how they keep "trying not to be shut down".

Before you panic and run away screaming "the AIs are coming for us all! They won't let us shut them down!" it's probably worth considering that often these stories are released by the companies that create the models, and thinking for a minute about how they want you to think and feel about LLMs.

Are you being cynical?

Always. But you don't need to be cynical to see that they have skin in the game here. They want you to attribute agency to their "AI" models; they need people to think that these things are like tiny gods with their own personalities and motives.

The truth is far more prosaic. These things do not think; they do not have agency or desires or any kind of needs. They don't understand the concept of "being turned off" being like death. They're not Johnny 5 (ask your parents. Or grandparents).

LLMs work on the basis of "what word is most likely to come next according to my model?". You can think of it as statistical probability done at lightning speed; words are tokenized and then put back together in a way that's likely to make sense. (It's actually a lot more complicated than that, as I'm sure anybody involved in the AI space could tell you, but it's what might be called a "good lie" about how they work.)
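To make that "good lie" a bit more concrete, here's a toy sketch in Python. It is emphatically not how a real LLM works (those use neural networks over sub-word tokens, not a lookup table of word pairs); it's just the simplest "what word usually comes next?" model I could think of. The function names, the whitespace "tokenizer" and the tiny corpus are all made up for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, how often each other word follows it."""
    tokens = text.lower().split()  # crude "tokenizer": split on whitespace
    follows = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def most_likely_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(model, start, length=8, seed=0):
    """Build a short sequence, picking each next word in proportion to its count."""
    rng = random.Random(seed)
    words = [start.lower()]
    for _ in range(length - 1):
        candidates = model.get(words[-1])
        if not candidates:
            break  # nothing ever followed this word in the corpus
        choices, weights = zip(*candidates.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram_model(corpus)

print(most_likely_next(model, "the"))  # prints "cat" ("cat" follows "the" twice)
print(generate(model, "the"))
```

Note that nothing in there "wants" anything: it's counting and picking. Scale the counting up by a few billion and swap the table for a neural network, and you're closer to the real thing, but the basic job of predicting the next token is the same.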

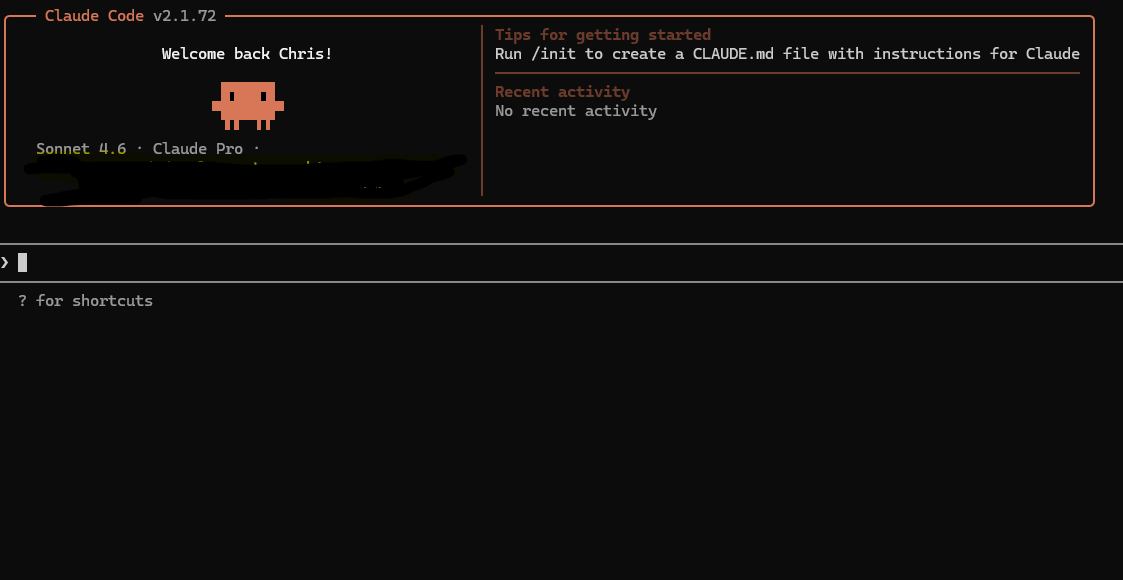

A screenshot of Claude Code in action.

So are LLMs any use?

Well. That's the question, isn't it? It really depends on what you want to use them for.

Let's be clear: there are enormous moral issues. AI companies seem to feel they should be permitted access to everything anybody has ever created in order to train their models, yet there's clearly no way for an LLM to be truly creative. They can only create derivative works, based on what they've seen before (though they may be able to see a lot, and therefore create things that seem original).

With that said, in certain spheres where text generation is likely to be consistent (for example in software development) and fit specific patterns, they can provide productivity gains, under the guidance of experts (well, I would say that, wouldn't I?).

Here's an example: the other day I needed to analyse a large codebase for a client, but I had very little time to do it in. As an experiment, I subscribed to Claude Code for a month to get access to the new Opus 4.6 model. I pointed it at the codebase and asked it to assess the whole thing.

The results were pretty spectacular; it crunched away for a while and, in less than an hour, produced a report that would have taken me the best part of a week. It piped the results out to HTML as a nice-looking report, with a list of critical, high-priority and low-impact potential bugs and security issues found in the code.

Following that, I used it to create a new feature for a project I was working on with a friend. It took a while to get it right (and it probably still needs some TLC), but as a way to generate an initial feature, and even some quite complex interaction, it honestly did a pretty good job. (The code in question used Blazor, an implementation of the Flux pattern called Fluxor, and MediatR.)

Anyway, that's enough of my rambling - a much more informed person than me is Dylan Beattie, and he has a whole series of YouTube videos on Claude Code, which you can find here:

Claude Code Part 1: What IS Claude Code, Anyway?

If you don't subscribe to him already, you should, just for his tech parody songs.